What is Software Testing? Definition, Types, and Tools

Software testing is the process of checking whether software meets the expectations set for it.

To test software, testers execute it under controlled conditions, across a wide range of scenarios, environments, and user interactions, to see whether any defects arise during the process.

Benefits of Software Testing

- Detect bugs: the primary goal of software testing is to identify bugs before they impact users. Since modern apps rely on interconnected components, a single issue can trigger a chain reaction. Early detection minimizes the impact.

- Maintain and improve software quality: testing ensures the software is stable, secure, and user-friendly. It also identifies areas for improvement and optimization.

- Build trust and satisfaction: consistent testing creates a stable and dependable product that delivers a positive user experience, which translates into user trust and satisfaction.

- Identify vulnerabilities to mitigate risks: in high-stakes industries like finance, healthcare, and law, testing prevents costly errors that could harm users or expose companies to legal risks. It acts as a safety net, ensuring critical systems remain secure and functional.

Types of Software Testing

There are two major types of software testing:

- Functional testing checks if software features work as expected.

- Non-functional testing checks if the software's non-functional aspects (e.g., stability, security, and usability) satisfy expectations.

There are many other testing types:

- Unit testing checks an individual unit in isolation from the rest of the application. A unit is the smallest testable part of any software.

- Integration testing checks the interaction between several individual units. These units usually have already passed unit testing.

- End-to-end testing checks an entire workflow in the software, from start to finish.

- System testing checks the entire system, including its functional and non-functional aspects.

- Exploratory testing is where testers explore the software without any predefined goals, trying to find bugs spontaneously.

- Visual testing checks if the software's visual aspects satisfy expectations.

- Regression testing checks if new code breaks existing features.

- UI testing checks if the User Interface (UI) satisfies expectations.

- Black-box testing is where testers check the software without knowing its code structure.

- White-box testing is where testers check the software with full knowledge of its code structure.

- Cross-browser testing checks if the software works across browsers and environments.

- Acceptance testing evaluates the application against real-life scenarios.

- Performance testing checks if the software can perform under stress (high user volume/extreme usage).
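To make the difference between unit and integration testing concrete, here is a minimal sketch using Python's built-in `unittest` module. The `apply_discount` function and the `Cart` class are hypothetical examples invented for illustration; they are not taken from any real application.

```python
import unittest


def apply_discount(price: float, percent: float) -> float:
    """Hypothetical unit under test: price after a percentage discount."""
    if not 0 <= percent <= 100:
        raise ValueError("percent must be between 0 and 100")
    return round(price * (1 - percent / 100), 2)


class Cart:
    """Hypothetical component that depends on apply_discount."""

    def __init__(self) -> None:
        self.prices = []

    def add(self, price: float) -> None:
        self.prices.append(price)

    def total(self, discount_percent: float = 0) -> float:
        return apply_discount(sum(self.prices), discount_percent)


class DiscountUnitTest(unittest.TestCase):
    """Unit level: one function, checked in isolation."""

    def test_ten_percent_off(self):
        self.assertEqual(apply_discount(200.0, 10), 180.0)

    def test_rejects_invalid_percent(self):
        with self.assertRaises(ValueError):
            apply_discount(200.0, 150)


class CartIntegrationTest(unittest.TestCase):
    """Integration level: Cart and apply_discount working together."""

    def test_total_with_discount(self):
        cart = Cart()
        cart.add(50.0)
        cart.add(150.0)
        self.assertEqual(cart.total(discount_percent=10), 180.0)


def run_all() -> unittest.TestResult:
    """Run both test classes and return the result object."""
    loader = unittest.defaultTestLoader
    suite = unittest.TestSuite([
        loader.loadTestsFromTestCase(DiscountUnitTest),
        loader.loadTestsFromTestCase(CartIntegrationTest),
    ])
    return unittest.TextTestRunner(verbosity=0).run(suite)
```

Calling `run_all()` executes all three tests. The unit tests would still pass if `Cart` were broken, while the integration test fails if either piece, or the way they are wired together, is wrong.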

Approach to Software Testing

Testers have two approaches to software testing: manual testing and automation testing. Each approach carries its own advantages and disadvantages, which testers must weigh carefully to make the best use of their resources.

- Manual Testing: Testers interact with the software step by step, exactly as a real user would, to see whether any issues come up. Anyone can start manual testing simply by assuming the role of a user. However, manual testing is time-consuming, since humans can't execute a task as fast as a machine, which is why automation testing is used to speed things up.

- Automation Testing: Instead of interacting with the system manually, testers use tools or write automation scripts that interact with the software on their behalf. The human tester only needs to click the “Run” button and let the script do the rest of the testing.
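As a rough illustration of the automation approach, the sketch below replaces a series of inputs a manual tester would type by hand with a table of scenarios that a script checks in one run. The `is_valid_email` function and the scenario list are assumptions made up for this example, and the validation rule is deliberately simplistic.

```python
import unittest


def is_valid_email(address: str) -> bool:
    """Deliberately simple check, used only for illustration."""
    local, sep, domain = address.partition("@")
    return bool(local) and sep == "@" and "." in domain and " " not in address


# Each row is one scenario a manual tester would otherwise enter by hand:
# (input, expected outcome).
SCENARIOS = [
    ("validuser@example.com", True),
    ("missing-at-sign.example.com", False),
    ("@example.com", False),
    ("user@localhost", False),
    ("user name@example.com", False),
]


class EmailValidationTest(unittest.TestCase):
    def test_scenarios(self):
        for address, expected in SCENARIOS:
            # subTest reports each failing row separately instead of
            # stopping at the first failure.
            with self.subTest(address=address):
                self.assertEqual(is_valid_email(address), expected)


def run_suite() -> unittest.TestResult:
    """The scripted equivalent of clicking "Run"."""
    suite = unittest.defaultTestLoader.loadTestsFromTestCase(EmailValidationTest)
    return unittest.TextTestRunner(verbosity=0).run(suite)
```

Adding a new scenario is a one-line change to `SCENARIOS`, which is where much of the speed advantage over manual re-testing comes from.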

Important Concepts in Software Testing

- Test Case – A set of conditions, inputs, and expected outputs designed to test a specific software function.

- Traceability Matrix – A document linking test cases to requirements to ensure full coverage.

- Test Script – An automated or manual step-by-step procedure for executing test cases.

- Test Suite – A collection of test cases designed to evaluate multiple aspects of a software system.

- Test Fixture (Test Data) – Predefined data and conditions used for testing consistency.

- Test Harness – A combination of software and test data used to test components in an isolated environment.

- Bug/Defect Life Cycle – The process a defect goes through from discovery to resolution.

- Severity vs. Priority – The difference between the impact of a bug (severity) and how urgently it needs to be fixed (priority).

- Test Environment – The setup of hardware, software, databases, and networks required to execute tests.

- Test Plan vs. Test Strategy – The test plan is a detailed document outlining test execution, while the test strategy is a higher-level approach to testing.

- Test Scenario – A high-level description of a real-world use case that needs to be tested.

- Test Data Management – The practice of creating, maintaining, and managing test data sets.

- Mocking and Stubbing – Simulating dependencies (APIs, databases, services) for isolated testing.

- Code Coverage – A metric that measures how much of the application's code has been tested.

- Smoke Testing vs. Sanity Testing – Smoke tests ensure basic functionality, while sanity tests verify specific fixes or updates.

- Edge Cases and Boundary Testing – Testing the extreme limits of input values in the system.

- Defect Reporting – The structured process of documenting and tracking software defects.

- Test Automation Frameworks – A structured set of guidelines for automated testing (e.g., data-driven, keyword-driven, hybrid).

- Continuous Testing in CI/CD – The practice of integrating automated tests into the software development pipeline to catch defects early.
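Of the concepts above, mocking and stubbing is often the easiest to grasp through code. The sketch below uses `unittest.mock.Mock` from Python's standard library. The `convert` function and the `get_rate` method of the rate client are hypothetical names chosen for this example, not part of any real service.

```python
from unittest.mock import Mock


def convert(amount: float, currency: str, rate_client) -> float:
    """Convert USD to another currency using an injected rate client."""
    rate = rate_client.get_rate("USD", currency)
    return round(amount * rate, 2)


# Stub: a canned answer stands in for the real (hypothetical)
# exchange-rate service, so the test needs no network access.
rate_client = Mock()
rate_client.get_rate.return_value = 0.5

converted = convert(100.0, "EUR", rate_client)

# Mock-style verification: confirm how the dependency was called.
rate_client.get_rate.assert_called_once_with("USD", "EUR")
```

Because the dependency is passed in as a parameter, the same `convert` function can be tested in isolation here and wired to a real client in production.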

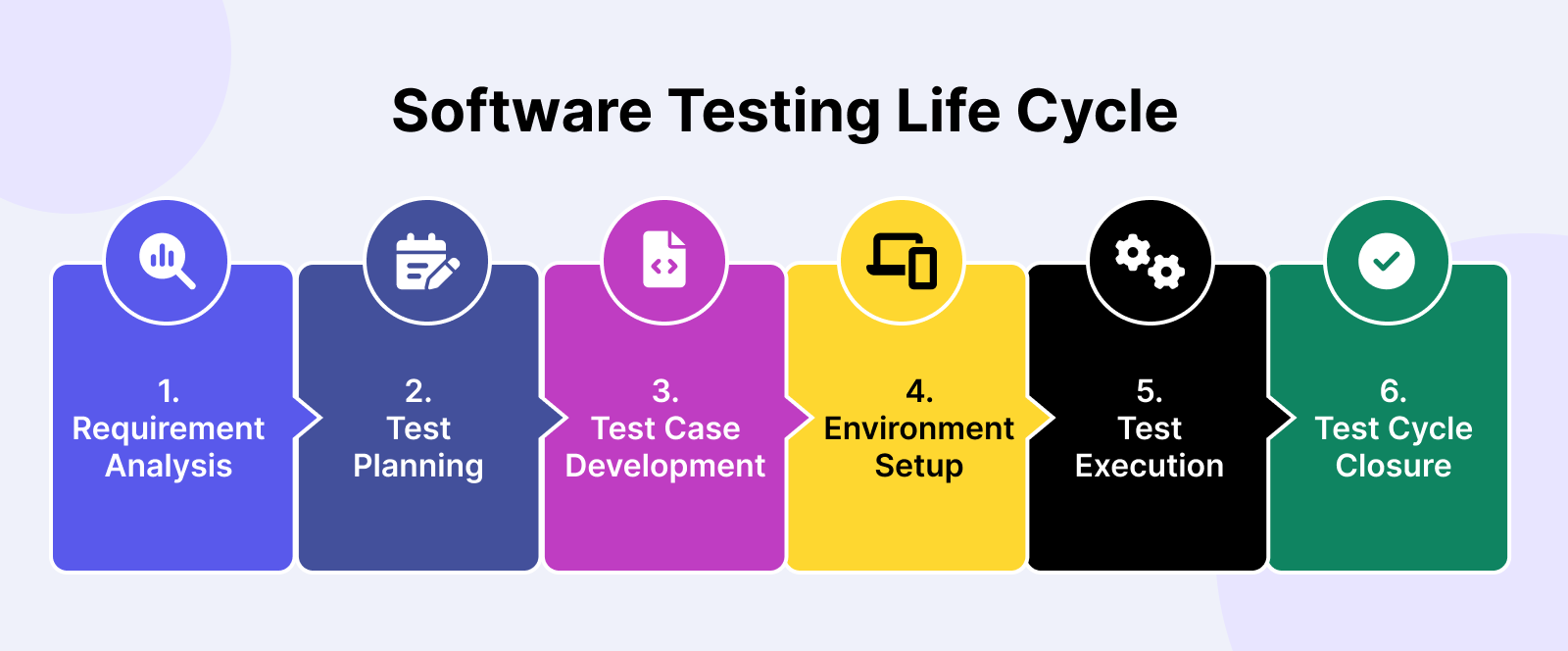

Software Testing Life Cycle

Many software testing initiatives follow a process commonly known as Software Testing Life Cycle (STLC). The STLC consists of 6 key activities to ensure that all software quality goals are met, as shown below:

1. Requirement Analysis

In this stage, software testers work with stakeholders involved in the development process to identify and understand test requirements. The insights from this discussion, consolidated into the Requirement Traceability Matrix (RTM) document, will be the foundation to build the test strategy.
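A traceability matrix can be as simple as a mapping from requirements to the test cases that cover them. The following sketch shows one possible representation; all requirement IDs, test case IDs, and descriptions are invented for illustration.

```python
# Requirement IDs and descriptions are made up for this example.
requirements = {
    "REQ-1": "User can log in with valid credentials",
    "REQ-2": "User sees an error for invalid credentials",
    "REQ-3": "User can reset a forgotten password",
}

# Traceability matrix: requirement ID -> test cases that cover it.
traceability = {
    "REQ-1": ["TC001"],
    "REQ-2": ["TC002", "TC003"],
}


def uncovered(requirements: dict, traceability: dict) -> list:
    """Return requirement IDs that have no linked test case."""
    return sorted(r for r in requirements if not traceability.get(r))


def coverage_percent(requirements: dict, traceability: dict) -> float:
    """Share of requirements with at least one linked test case."""
    covered = len(requirements) - len(uncovered(requirements, traceability))
    return round(100 * covered / len(requirements), 1)
```

Here `uncovered(requirements, traceability)` flags `REQ-3` as a gap, which is exactly the kind of insight the RTM is meant to surface before test planning begins.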

Three main roles (often called the "three amigos") are involved in the process:

- Product Owner: Represents the business side and wants to solve a specific problem.

- Developer: Represents the development side and aims to build a solution to address the Product Owner's problem.

- Tester: Represents the QA side and checks if the solution works as intended and identifies potential issues.

2. Test Planning

After thorough analysis, a test plan is created. Test planning involves aligning with relevant stakeholders on the test strategy:

- Test objectives: Define attributes like functionality, usability, security, performance, and compatibility.

- Output and deliverables: Document the test scenarios, test cases, and test data to be produced and monitored.

- Test scope: Determine which areas and functionalities of the application will be tested (in-scope) and which ones won't (out-of-scope).

- Resources: Estimate the costs for test engineers, manual/automated testing tools, environments, and test data.

- Timeline: Establish expected milestones for test-specific activities along with development and deployment.

- Test approach: Assess the testing techniques (white box/black box testing), test levels (unit, integration, and end-to-end testing), and test types (regression, sanity testing) to be used.

3. Test Case Development

After defining the scenarios and functionalities to be tested, we'll start writing the test cases.

Here's what a basic test case looks like:

| Component | Details |
| --- | --- |
| Test Case ID | TC001 |
| Description | Verify Login with Valid Credentials |
| Preconditions | User is on the Etsy login popup |
| Test Steps | 1. Enter a valid email address. 2. Enter the corresponding valid password. 3. Click the "Sign In" button. |
| Test Data | Email: validuser@example.com Password: validpassword123 |
| Expected Result | Users should be successfully logged in and redirected to the homepage or the previously intended page. |
| Actual Result | (To be filled in after execution) |
| Postconditions | User is logged in and the session is active |
| Pass/Fail Criteria | Pass: Test passes if the user is logged in and redirected correctly. Fail: Test fails if an error message is displayed or the user is not logged in. |
| Comments | Ensure the test environment has network access and the server is operational. |

This is a test case to check Etsy's login.
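The same test case can also be captured as structured data, which makes it easier to store, execute, and report on. The sketch below is one possible shape, assuming a hypothetical `fake_login` function that stands in for the real login flow; the field names mirror the components listed in the test case.

```python
from dataclasses import dataclass


@dataclass
class TestCaseRecord:
    case_id: str
    description: str
    preconditions: str
    steps: list
    test_data: dict
    expected_result: str
    actual_result: str = ""   # filled in after execution
    status: str = "Untested"


def fake_login(email: str, password: str) -> str:
    """Stand-in for the real login flow; returns the page the user lands on."""
    known_users = {"validuser@example.com": "validpassword123"}
    return "homepage" if known_users.get(email) == password else "login_error"


tc001 = TestCaseRecord(
    case_id="TC001",
    description="Verify Login with Valid Credentials",
    preconditions="User is on the login popup",
    steps=[
        "Enter a valid email address.",
        "Enter the corresponding valid password.",
        'Click the "Sign In" button.',
    ],
    test_data={"email": "validuser@example.com", "password": "validpassword123"},
    expected_result="homepage",
)


def execute(case: TestCaseRecord, action) -> TestCaseRecord:
    """Run the action with the case's test data and record the outcome."""
    case.actual_result = action(**case.test_data)
    case.status = "Passed" if case.actual_result == case.expected_result else "Failed"
    return case


execute(tc001, fake_login)
```

After `execute` runs, `tc001.actual_result` and `tc001.status` hold the values that would go into the "Actual Result" and pass/fail fields.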

When writing a test case, make sure it clearly shows what’s being tested, what the expected outcome is, and how to troubleshoot if bugs appear.

After that comes test case management, which is simply tracking and organizing your test cases. You can do this with spreadsheets or tools like Xray for manual tests or use automation tools like Selenium, Cypress, or Katalon for faster results.

4. Test Environment Setup

Setting up the test environment involves preparing the software and hardware needed to test an app, like servers, browsers, networks, and devices.

For a mobile app, you’ll need:

- Development environment for early testing:
  - Tools like Xcode (iOS) or Android Studio (Android)
  - Simulators/emulators for virtual testing
  - Local databases and mock APIs
  - CI tools to run automatic tests

- Physical devices to catch real-world issues:
  - Different models (e.g., iPhone, Galaxy)
  - Various OS versions (e.g., iOS 14, Android 11)
  - Tools like Appium for automated testing

- Emulation environment for quick tests without physical devices:
  - Android emulators and iOS simulators
  - Various screen resolutions, RAM, and CPU configurations
  - Debug tools in Xcode or Android Studio

5. Test Execution

With clear objectives in mind, the QA team writes test cases and test scripts and prepares the necessary test data for execution.

Tests can be executed manually or automatically. After the tests are executed, any defects found are tracked and reported to the development team, who promptly resolve them.

During execution, the test case goes through the following stages:

- Untested: The test case has not been executed yet at this stage.

- Blocked/On hold: This status applies to test cases that can’t be executed due to dependencies like unresolved defects, unavailable test data, system downtime, or incomplete components.

- Failed: This status indicates that the actual outcome didn’t match the expected outcome. In other words, the test conditions weren’t met, prompting the team to investigate and find the root cause.

- Passed: The test case was executed successfully, with the actual outcome matching the expected result. Testers love to see a lot of passed cases, as it signals good software quality.

- Skipped: A test case may be skipped if it’s not relevant to the current testing scenario. The reason for skipping is usually documented for future reference.

- Deprecated: This status is for test cases that are no longer valid due to changes or updates in the application. The test case can be removed or archived.
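The statuses above map naturally onto an enumeration, which keeps status values consistent across a test run. The sketch below is one way to model them and summarize a run; the test case IDs and their results are hypothetical.

```python
from collections import Counter
from enum import Enum


class Status(Enum):
    UNTESTED = "Untested"
    BLOCKED = "Blocked"
    FAILED = "Failed"
    PASSED = "Passed"
    SKIPPED = "Skipped"
    DEPRECATED = "Deprecated"


# Hypothetical results of one test run, keyed by test case ID.
run_results = {
    "TC001": Status.PASSED,
    "TC002": Status.FAILED,
    "TC003": Status.BLOCKED,
    "TC004": Status.PASSED,
    "TC005": Status.UNTESTED,
}


def summarize(results: dict) -> dict:
    """Count how many test cases sit in each status."""
    counts = Counter(results.values())
    return {status.value: counts.get(status, 0) for status in Status}


def needs_attention(results: dict) -> list:
    """Failed and blocked cases are typically the first to be triaged."""
    return sorted(
        case_id for case_id, status in results.items()
        if status in (Status.FAILED, Status.BLOCKED)
    )
```

`summarize(run_results)` gives a per-status count for the whole run, and `needs_attention(run_results)` lists the cases that are holding the run back.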

6. Test Cycle Closure

Finally, you need a test report to document the details of what happened during the software testing process. In a test report, you can usually see 4 main elements:

- Visualizations: Charts, graphs, and diagrams to show testing trends and patterns.

- Performance: Track performance trends like execution times and success rates.

- Comparative analysis: Compare results across different software versions to identify improvements or regressions.

- Recommendations: Provide actionable insights on which areas need debugging attention.

Software testers will then gather to analyze the report, evaluate the effectiveness, and document key takeaways for future reference.
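Two of the report elements described above, success rates and comparative analysis, reduce to simple calculations over test outcomes. The sketch below assumes outcomes are stored as plain strings per test case ID; the two result sets `v1` and `v2` are invented data for illustration.

```python
def pass_rate(results: dict) -> float:
    """Percentage of executed test cases that passed."""
    executed = [r for r in results.values() if r in ("passed", "failed")]
    if not executed:
        return 0.0
    return round(100 * executed.count("passed") / len(executed), 1)


def regressions(previous: dict, current: dict) -> list:
    """Test cases that passed in the previous version but fail now."""
    return sorted(
        case_id for case_id, outcome in current.items()
        if outcome == "failed" and previous.get(case_id) == "passed"
    )


# Hypothetical outcomes for two releases of the same application.
v1 = {"TC001": "passed", "TC002": "passed", "TC003": "failed", "TC004": "passed"}
v2 = {"TC001": "passed", "TC002": "failed", "TC003": "passed", "TC004": "passed"}
```

In this made-up data both versions have the same pass rate, yet `regressions(v1, v2)` still flags `TC002`. That is why a comparative view is useful alongside the headline numbers.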

Popular Software Testing Models

Testing models have evolved in parallel with software development methodologies.

1. V-model

In the past, QA teams had to wait until the final development stage to start testing. Test quality was usually poor, and developers could not troubleshoot in time for product release.

The V-model solves that problem by engaging testers in every phase of development. Each development phase is assigned a corresponding testing phase. This model pairs naturally with the Waterfall development method, which is now nearly obsolete.

On one side, there is “Verification”. On the other side, there is “Validation”.

- Verification is about “Are we building the product right?”

- Validation is about “Are we building the right product?”

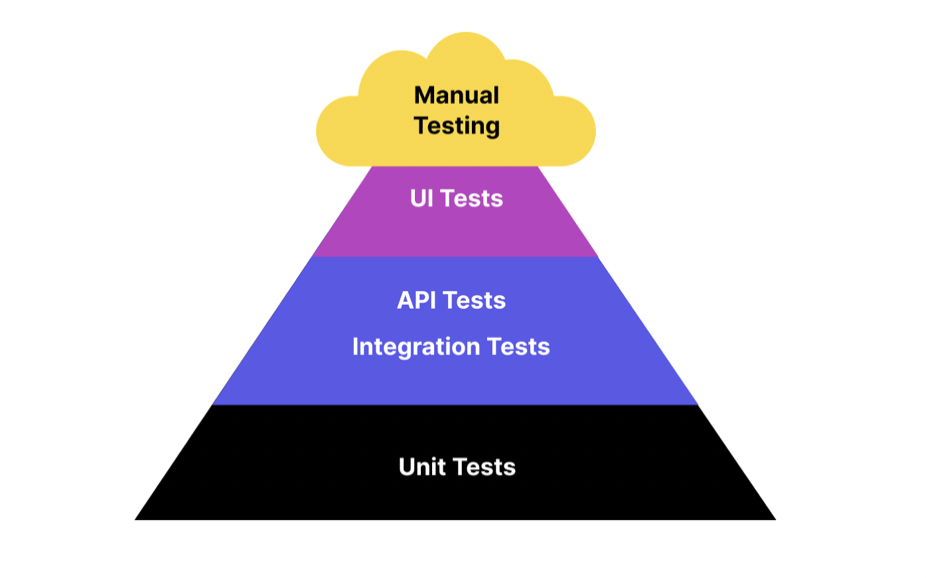

2. Test Pyramid model

As technology advanced, the Waterfall model gradually gave way to the now widely used Agile methods. The V-model, in turn, evolved into the Test Pyramid model, which visually represents a three-layer testing strategy.

Most of the tests are unit tests, which validate only individual components. Next, testers group those components and test them as a unified entity to see how they interact. At the top of the pyramid sits a smaller number of end-to-end tests that exercise the application as a whole. Automation testing can be leveraged at the lower layers in particular for optimal efficiency.

3. The Honeycomb Model

The Honeycomb model is a modern approach to software testing in which Integration testing is a primary focus, while Unit Testing (Implementation Details) and UI Testing (Integrated) receive less attention. This software testing model reflects an API-focused system architecture as organizations move towards cloud infrastructure.

Manual Testing vs. Automated Software Testing: Which One to Choose?

| Aspect | Manual Testing | Automation Testing |
| --- | --- | --- |
| Definition | Testing conducted manually by a human without the use of scripts or tools. | Testing conducted using automated tools and scripts to execute test cases. |
| Execution Speed | Slower, as it relies on human effort. | Faster, as tests are executed by automated tools. |
| Initial Investment | Low, as it primarily requires human resources. | High, due to the cost of tools and the time required to write scripts. |
| Accuracy | Prone to human error, especially in repetitive tasks. | More accurate, as it eliminates human error in repetitive tasks. |
| Test Coverage | Limited by human ability to perform extensive and repetitive tests. | Extensive, as automated tests can run repeatedly with large data sets. |
| Usability Testing | Effective, as it relies on human judgment and feedback. | Ineffective, as tools cannot judge user experience and intuitiveness. |
| Exploratory Testing | Highly effective, as humans can explore the application creatively. | Ineffective, as it requires human intuition and exploratory skills. |
| Regression Testing | Time-consuming and labor-intensive. | Highly efficient, as tests can be rerun automatically with each code change. |
| Maintenance | Lower, but can become tedious with frequent changes. | Requires significant maintenance to update scripts with application changes. |
| Initial Setup Time | Minimal, as it does not require scripting or tool setup. | High, due to the need to develop test scripts and set up tools. |
| Skill Requirement | Requires knowledge of the application and testing principles. | Requires programming skills and knowledge of automation tools. |
| Cost Efficiency | More cost-effective for small-scale or short-term projects. | More cost-effective for large-scale or long-term projects with repetitive tests. |
| Reusability of Tests | Limited, as manual tests need to be recreated each time. | High, as automated tests can be reused across different projects. |

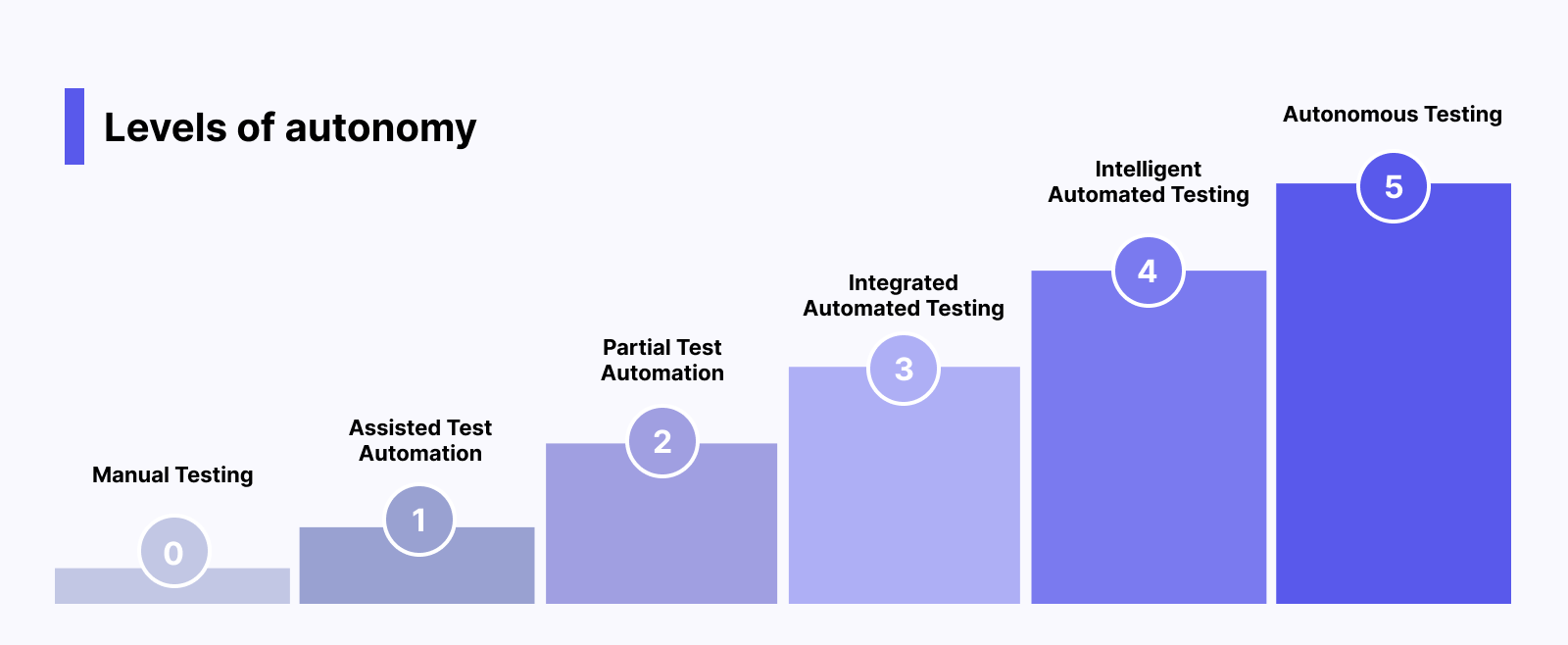

Is Automated Testing Making Manual Testing Obsolete?

Automated testing takes software testing to the next level, enabling QA teams to test faster and more efficiently. So is it making manual testing a thing of the past?

The short-term answer is “No”.

The long-term answer is “Maybe”.

Manual testing is always needed because only humans can evaluate the application’s UX and supervise automation testing.

However, AI technology is gradually changing the landscape. Smart testing features have been added to many automated software testing tools to drastically reduce the need for human intervention.

In the future, we can expect to reach Autonomous Testing, where machines completely take control and perform all testing activities. Many software testing tools have leveraged LLMs to bring us closer to this autonomous testing future.

Top Software Testing Tools with Best Features

1. Katalon

Katalon allows QA teams to author automated UI and API tests for web, mobile, and desktop apps, execute those tests on preconfigured cloud environments, and maintain them, all in one unified platform without additional third-party tools. The Katalon Platform is among the leading commercial automation tools for functional software testing.

- Test Planning: Ensure alignment between requirements and testing strategy. Maintain focus on quality by connecting TestOps to project requirements, business logic, and release planning. Optimize test coverage and execute tests efficiently using dynamic test suites and smart scheduling.

- Test Authoring: Katalon Studio combines low-code simplicity with full-code flexibility (this means anyone can create automation test scripts and customize them as they want). Automatically capture test objects, properties, and locators to use.

- Test Organization: TestOps organizes all your test artifacts in one place: test cases, test suites, environments, objects, and profiles for a holistic view. Seamlessly map automated tests to existing manual tests through one-click integrations with tools like Jira and Xray.

- Test Execution: Instant web and mobile test environments. TestCloud provides on-demand environments for running tests in parallel across browsers, devices, and operating systems, while handling the heavy lifting of setup and maintenance. The Runtime Engine streamlines execution in your own environment with smart wait, self-healing, scheduling, and parallel execution.

- Test Analysis: Real-time visibility and actionable insights. Quickly identify failures with auto-detected assertions and dive deeper with comprehensive execution views. Gain broader insights with coverage, release, flakiness, and pass/fail trend reports. Receive real-time notifications and leverage the 360-degree visibility in TestOps for faster, clearer, and more confident decision-making.

2. Selenium

Selenium is a versatile open-source automation testing library for web applications. It is popular among developers due to its compatibility with major browsers (Chrome, Safari, Firefox) and operating systems (macOS, Windows, Linux).

Selenium simplifies testing by reducing manual effort and providing an intuitive interface for creating automated tests. Testers can use programming languages like Java, C#, Ruby, and Python to interact with the web application. Key features of Selenium include:

- Selenium Grid: A distributed test execution platform that enables parallel execution on multiple machines, saving time.

- Selenium IDE: An open-source record and playback tool for creating and debugging test cases. It supports exporting tests to various formats (JUnit, C#, Java).

- Selenium WebDriver: A component of the Selenium suite used to control web browsers, allowing simulation of user actions like clicking links and entering data.

Website: Selenium

GitHub: SeleniumHQ

3. Appium

Appium is an open-source automation testing tool specifically designed for mobile applications. It enables users to create automated UI tests for native, web-based, and hybrid mobile apps on Android and iOS platforms using the mobile JSON wire protocol. Key features include:

- Supported programming languages: Java, C#, Python, JavaScript, Ruby, PHP, Perl

- Cross-platform testing with reusable test scripts and consistent APIs

- Execution on real devices, simulators, and emulators

- Integration with other testing frameworks and CI/CD tools

Appium simplifies mobile app testing by providing a comprehensive solution for automating UI tests across different platforms and devices.

Website: Appium Documentation

Conclusion

Ultimately, the goal of software testing is to deliver applications that meet and exceed user expectations. A comprehensive testing strategy is one that combines the best of manual and automation testing.

FAQs On Software Testing

1. What is the most common type of software testing?

The most common type is functional testing, which checks if the software works as intended. Other popular types include unit testing (by developers) and regression testing (to ensure new changes don’t break existing features). The testing type depends on the project needs.

2. Do software testers do coding?

It depends. Manual testers usually don’t need coding. Automation testers do, using tools like Selenium or Cypress to write test scripts. While coding isn’t mandatory for all testers, it’s becoming increasingly important in automation roles.

3. Which methodology is best for software testing?

Agile is the most widely used as it supports continuous testing and fast feedback. Waterfall suits projects with fixed requirements. DevOps is great for CI/CD pipelines. The best choice depends on the project.

4. What is the SDLC in software testing?

SDLC (Software Development Life Cycle) outlines the steps from idea to release, with testing included at every stage to ensure quality. Testing activities take place across phases such as requirement analysis, development, testing, and maintenance.

5. Is QA an IT job?

Yes, QA is an IT job that ensures software meets quality standards. QA roles involve both technical skills (like using testing tools) and soft skills (like attention to detail). Automation QA roles are more technical.

6. Does QA require coding?

Manual testing doesn’t require coding. Automation testing does, as testers write scripts to automate tests. While coding isn’t always needed, it’s becoming a valuable skill for QA professionals.